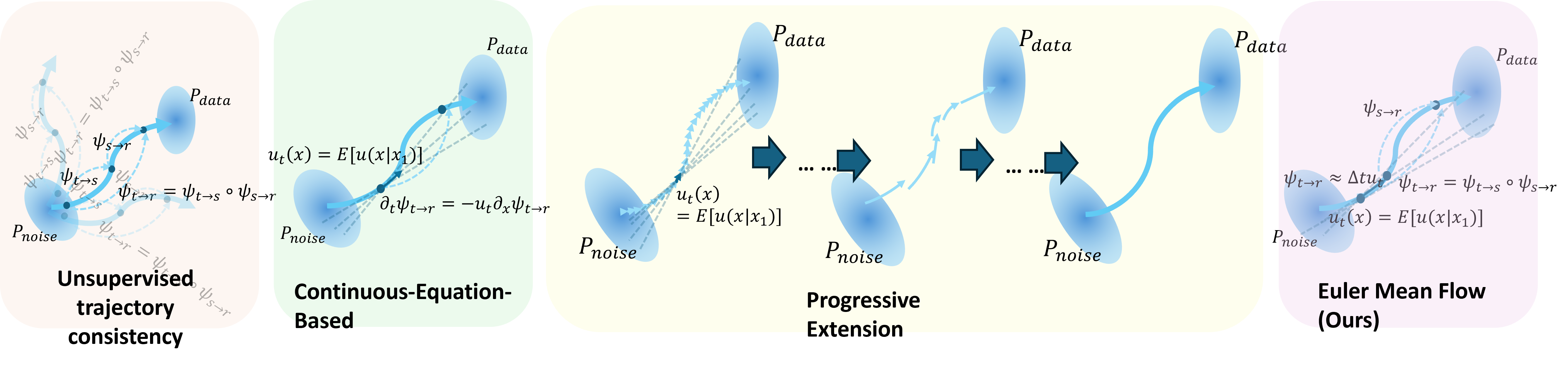

Progressive extension

ShortCut, PSD, and SplitMeanFlow extend short maps to long maps through self-consistency: their long maps are learned from the model's own outputs, not from direct dataset supervision.

\[
\left\| u^\theta_{t\to t}(x) - u_t(x\mid x_1) \right\|^2,\qquad
u_t(x\mid x_1)=\frac{x_1-x}{1-t},\; x_1\in\mathcal D
\]
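The boundary term above is plain conditional flow matching. Below is a minimal NumPy sketch of how its targets can be sampled. It assumes the linear path \(x_t=(1-t)x_0+t x_1\) with \(x_0\sim\mathcal N(0,I)\), which is the path under which \(u_t(x\mid x_1)=(x_1-x)/(1-t)\). The names `boundary_batch`, `boundary_loss`, and the network signature `u_theta(x, t, r)` are hypothetical.

```python
import numpy as np

def conditional_velocity(x, x1, t):
    # u_t(x | x_1) = (x_1 - x) / (1 - t), defined for t < 1
    return (x1 - x) / (1.0 - t)

def boundary_batch(x1, rng):
    """Sample x_t on the assumed linear path x_t = (1 - t) x_0 + t x_1, x_0 ~ N(0, I)."""
    x0 = rng.standard_normal(x1.shape)
    t = rng.uniform(0.0, 1.0, size=(x1.shape[0], 1))  # draws lie in [0, 1), so 1 - t > 0
    xt = (1.0 - t) * x0 + t * x1
    return x0, xt, t, conditional_velocity(xt, x1, t)

def boundary_loss(u_theta, x1, rng):
    # u_theta(x, t, r) is a hypothetical network; r = t selects the instantaneous velocity
    _, xt, t, target = boundary_batch(x1, rng)
    pred = u_theta(xt, t, t)
    return np.mean(np.sum((pred - target) ** 2, axis=-1))
```

On this path the target simplifies to \(x_1-x_0\), so every long-map method ultimately anchors to this data-driven quantity at \(r=t\).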

\[
\left\| u^\theta_{t\to t+2d}(x_t) - \frac{1}{2}\left[ u^\theta_{t\to t+d}(x_t) + u^\theta_{t+d\to t+2d}(x_{t+d}) \right] \right\|^2
\]

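Here \(x_{t+d}=x_t+d\,u^\theta_{t\to t+d}(x_t)\) is itself a model prediction. Thus \(u^\theta_{t\to t+2d}(x_t)\) is extended from the model's own shorter-step predictions, and long maps receive no direct supervision from \(\mathcal D\).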
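A small NumPy illustration of why this matters. It assumes that the shortest map is a single Euler step of a velocity `v` and that every consistency target is matched exactly. Under those assumptions, a one-step map built by \(K\) doublings is exactly \(2^K\) Euler steps, discretization error included. The toy flow \(dx/dt=x\) and all function names are hypothetical, chosen only because its average velocity \(x\,(e^{r-t}-1)/(r-t)\) is known in closed form.

```python
import numpy as np

def consistency_target(u, x, t, d):
    """Target for the 2d-step map: average of two chained d-steps of the model itself."""
    u_first = u(x, t, t + d)               # d-step from x_t
    x_mid = x + d * u_first                # x_{t+d}, produced by the model, not the data
    u_second = u(x_mid, t + d, t + 2 * d)  # d-step from x_{t+d}
    return 0.5 * (u_first + u_second)

def doubled_map(v, levels):
    """Build u(x, t, r) purely from consistency, starting from one Euler step."""
    if levels == 0:
        return lambda x, t, r: v(x, t)     # shortest map: one Euler step of v
    shorter = doubled_map(v, levels - 1)
    return lambda x, t, r: consistency_target(shorter, x, t, (r - t) / 2)

def euler(v, x, t, d, steps):
    # plain multi-step Euler integration of dx/dt = v(x, t) over [t, t + d]
    h = d / steps
    for k in range(steps):
        x = x + h * v(x, t + k * h)
    return x

def exact_u(x, t, r):
    # toy flow dx/dt = x: x_r = x_t * exp(r - t), so the average velocity is closed-form
    s = r - t
    return x * np.expm1(s) / s
```

The exact map satisfies the consistency equation, but a map assembled only from short steps reproduces the short steps' error. Nothing in the objective pulls it toward the data.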

Continuous methods

MeanFlow, ESD, and LSD use data-based conditional velocities for direct supervision, but they require Jacobian-vector products (JVPs). These increase memory use and training time, can destabilize training, and limit their use in settings where derivatives are fragile.

\[
\left\| u^\theta_{t\to r}(x) - \operatorname{sg}\!\left[ u_t(x\mid x_1) + (r-t)\left( u_t(x\mid x_1)\,\partial_x u^\theta_{t\to r}(x) + \partial_t u^\theta_{t\to r}(x) \right) \right] \right\|^2
\]

\[
u_t(x\mid x_1)=\frac{x_1-x}{1-t},\qquad x_1\in\mathcal D .
\]

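Because the target is built from \(u_t(x\mid x_1)\), this gives direct dataset supervision. However, the \(\partial_x u^\theta\) and \(\partial_t u^\theta\) terms together form the total time derivative of \(u^\theta_{t\to r}\) along the flow. That derivative must be computed as a JVP through the network with tangent \((u_t(x\mid x_1), 1)\) on \((x, t)\), which is the source of the derivative computation and overhead.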
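A minimal scalar sketch of the target above, with hand-rolled forward-mode dual numbers standing in for a framework JVP. In practice one would call something like `jax.jvp` or `torch.func.jvp`. The tangent \((v, 1, 0)\) on \((x, t, r)\) yields \(v\,\partial_x u+\partial_t u\) in one pass. Here `u_toy` is a hypothetical stand-in for \(u^\theta\): the exact average velocity of the toy flow \(dx/dt=x\). For the check, `v` is that flow's true velocity \(v(x)=x\). In training, `v` would be \(u_t(x\mid x_1)\).

```python
import math
from dataclasses import dataclass

@dataclass
class Dual:
    """A value paired with its directional derivative (forward-mode tangent)."""
    val: float
    tan: float = 0.0

    @staticmethod
    def lift(o):
        return o if isinstance(o, Dual) else Dual(float(o))

    def __add__(self, o):
        o = Dual.lift(o)
        return Dual(self.val + o.val, self.tan + o.tan)

    __radd__ = __add__

    def __sub__(self, o):
        o = Dual.lift(o)
        return Dual(self.val - o.val, self.tan - o.tan)

    def __rsub__(self, o):
        return Dual.lift(o) - self

    def __mul__(self, o):
        o = Dual.lift(o)
        return Dual(self.val * o.val, self.tan * o.val + self.val * o.tan)

    __rmul__ = __mul__

    def __truediv__(self, o):
        o = Dual.lift(o)
        return Dual(self.val / o.val, (self.tan * o.val - self.val * o.tan) / o.val ** 2)

def dexp(a):
    # exp with its tangent: d exp(a) = exp(a) * da
    e = math.exp(a.val)
    return Dual(e, e * a.tan)

def jvp(f, primals, tangents):
    """One forward pass carrying tangents; returns (f(primals), J_f(primals) @ tangents)."""
    out = f(*(Dual(p, d) for p, d in zip(primals, tangents)))
    return out.val, out.tan

def meanflow_target(u_theta, x, t, r, v):
    """v + (r - t) (v du/dx + du/dt) for scalar x, via one JVP with tangent (v, 1, 0)."""
    u_val, total_dt = jvp(u_theta, (x, t, r), (v, 1.0, 0.0))
    return u_val, v + (r - t) * total_dt  # plain floats: treated as a constant, like sg[.]

def u_toy(x, t, r):
    # hypothetical stand-in for u^theta: exact average velocity of dx/dt = x
    s = r - t
    return x * (dexp(s) - 1.0) / s
```

Each primitive computes a tangent next to its value. The JVP therefore adds a constant-factor arithmetic cost to every forward evaluation and carries a second number for every intermediate. This is the overhead the derivative terms introduce.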